Activity-Based Intelligence: Principles and Applications

ABI represents a fundamentally different way of doing intelligence analysis, one that is important in its own terms but that also offers the promise of creatively disrupting what is by now a pretty tired paradigm for thinking about the intelligence process.

ABI enables discovery as a core principle. Discovery—how to do it and what it means—is an exciting challenge, one that the intelligence community is only beginning to confront, and so this book is especially timely.

The prevailing intelligence paradigm is still very linear when the world is not: Set requirements, collect against those requirements, then analyze. Or as one wag put it: “Record, write, print, repeat.”

ABI disrupts that linear collection, exploitation, dissemination cycle of intelligence. It is focused on combining data—any data—where it is found. It does not prize data from secret sources but combines unstructured text, geospatial data, and sensor-collected intelligence. It marked an important passage in intelligence fusion and was the first manual evolution of “big data” analysis by real practitioners. ABI’s initial focus on counterterrorism impelled it to develop patterns of life on individuals by correlating their activities, or events and transactions in time and space.

ABI is based on four fundamental pillars that are distinctly different from other intelligence methods. The first is georeference to discover. Sometimes the only thing data has in common is time and location, but that can be enough to enable discovery of important correlations, not just reporting what happened. The second is sequence neutrality: We may find a critical puzzle piece before we know there is a puzzle. Think how often that occurs in daily life, when you don’t really realize you were puzzled by something until you see the answer.

The third principle is data neutrality. Data is data, and there is no bias toward classified secrets. ABI does not prize exquisite data from intelligence sources over other sources the way that the traditional paradigm does. The fourth principle comes full circle to the first: integrate before exploitation. The data is integrated in time and location so it can be discovered, but that integration happens before any analyst turns to the data.

ABI necessarily has pushed advances in dealing with “big data,” enabling technologies that automate manual workflows, thus letting analysts do what they do best. In particular, to be discoverable, the metadata, like time and location, have to be normalized. That requires techniques for filtering metadata and drawing correlations. It also requires new techniques for visualization, especially geospatial visualization, as well as tools for geotemporal pattern analysis. Automated activity extraction increases the volume of georeferenced data available for analysis.
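As a rough illustration of what normalizing time and location metadata can involve, the Python sketch below converts two hypothetical records to a common form: UTC timestamps and decimal-degree coordinates stored as floats. The record layout, the field names, and the rule that timestamps without an offset are treated as UTC are all assumptions made for the example, not part of any ABI system.

```python
from datetime import datetime, timezone


def normalize_record(raw):
    """Return a copy of `raw` with a UTC timestamp and float coordinates."""
    ts = datetime.fromisoformat(raw["time"])
    if ts.tzinfo is None:
        # Assumption for this sketch: timestamps without an offset are UTC.
        ts = ts.replace(tzinfo=timezone.utc)
    return {
        "source": raw["source"],
        "time_utc": ts.astimezone(timezone.utc),
        "lat": float(raw["lat"]),
        "lon": float(raw["lon"]),
    }


# Two hypothetical records that describe time and location differently.
raw_records = [
    {"source": "report", "time": "2014-06-01T14:30:00+03:00",
     "lat": "33.3152", "lon": "44.3661"},
    {"source": "sensor", "time": "2014-06-01T11:45:00",
     "lat": 33.3160, "lon": 44.3650},
]

normalized = [normalize_record(r) for r in raw_records]
for rec in normalized:
    print(rec["source"], rec["time_utc"].isoformat(), rec["lat"], rec["lon"])
```

Once every record shares one time reference and one coordinate convention, records from unrelated sources can be filtered and compared directly.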

ABI is also enabled by new algorithms for correlation and fusion, including rapidly evolving advanced modeling and machine learning techniques.

ABI came of age in the fight against terror, but it is an intelligence method that can be extended to other problems—especially those that require identifying the bad guys among the good in areas like counternarcotics or maritime domain awareness. Beyond that, ABI’s emphasis on correlation instead of causation can disrupt all-too-comfortable assumptions. Sure, analysts will find lots of spurious correlations, but they will also find intriguing connections in interesting places, not full-blown warnings but, rather, hints about where to look and new connections to explore.

This textbook describes a revolutionary intelligence analysis methodology using approved, open-source, or commercial examples to introduce the student to the basic principles and applications of activity-based intelligence (ABI).

Preface

Writing about a new field, under the best of circumstances, is a difficult endeavor. This is doubly true when writing about the field of intelligence, which by its nature must operate in the shadows, hidden from the public view. Developments in intelligence, particularly in analytic tradecraft, are veiled in secrecy to protect sources and methods.

Activity-Based Intelligence: Principles and Applications is aimed at students of intelligence studies, entry-level analysts, technologists, and senior-level policy makers and executives who need a basic primer on this emergent series of methods. This text is authoritative in the sense that it documents, for the first time, an entire series of difficult concepts and processes used by analysts during the wars in Iraq and Afghanistan to great effect. It also summarizes basic enabling techniques, technologies, and methodologies that have become associated with ABI.

1

Introduction and Motivation

By mid 2014, the community was once again at a crossroads: the dawn of the fourth age of intelligence. This era is dominated by diverse threats, increasing change, and increasing rates of change. This change also includes an explosion of information technology and a convergence of telecommunications, location-aware services, and the Internet with the rise of global mobile computing. Tradecraft for intelligence integration and multi-INT dominates the intelligence profession. New analytic methods for “big data” analysis have been implemented to address the tremendous increase in the volume, velocity, and variety of data sources that must be rapidly and confidently integrated to understand increasingly dynamic and complex situations. Decision makers in an era of streaming real-time information are placing increasing demands on intelligence professionals to anticipate what may happen…against an increasing range of threats amidst an era of declining resources. This textbook is an introduction to the methods and techniques for this new age of intelligence. It leverages what we learned in the previous ages and introduces integrative approaches to information exploitation to improve decision advantage against emergent and evolving threats.

1.1.1 Dynamic Change and Diverse Threats

Transnational criminal organizations, terrorist groups, cyberactors, counterfeiters, and drug lords increasingly blend together; multipolar statecraft is being rapidly replaced by groupcraft.

The impact of this dynamism is dramatic. In the Cold War, intelligence focused on a single nation-state threat coming from a known location. During the Global War on Terror, the community aligned against a general class of threat coming from several known locations, albeit with ambiguous tactics and methods. The fourth age is characterized by increasingly asymmetric, unconventional, unpredictable, proliferating threats menacing and penetrating from multiple vectors, even from within. Gaining a strategic advantage against these diverse threats requires a new approach to collecting and analyzing information.

1.1.2 The Convergence of Technology and the Dawn of Big Data

Information processing and intelligence capabilities are becoming democratized.

In addition to rapidly proliferating intelligence collection capabilities, the fourth age of intelligence coincided with the introduction of the term “big data.” Big data refers to high-volume, high-velocity data that is difficult to process, store, and analyze with traditional information architectures. It is thought that the term was first used in an August 1999 article in Communications of the ACM [16]. The McKinsey Global Institute calls big data “the next frontier for innovation, competition, and productivity” [17]. New technologies like crowdsourcing, data fusion, machine learning, and natural language processing are being used in commercial, civil, and military applications to improve the value of existing data sets and to derive a competitive advantage. A major shift is under way from technologies that simply store and archive data to those that process it—including real-time processing of multiple “streams” of information.

1.1.3 Multi-INT Tradecraft: Visualization, Statistics, and Spatiotemporal Analysis

Today, the most powerful computational techniques are being developed for business intelligence, high-speed stock trading, and commercial retailing. These are analytic techniques (which intelligence professionals call their “tradecraft”) developed in tandem with the “big data” information explosion. They differ from legacy analysis techniques because they are visual, statistical, and spatial.

The emerging field of visual analytics is “the science of analytical reasoning facilitated by visual interactive interfaces” [20, p. 4]. It recognizes that humans are predisposed to recognize trends and patterns when they are presented using consistent and creative cognitive and perceptual techniques. Technological advances like high-resolution digital displays, powerful graphics cards and graphics processing units, and interactive visualization and human-machine interfaces have changed the way scientists and engineers analyze data. These methods include three-dimensional visualizations, clustering algorithms, data filtering techniques, and the use of color, shape, and motion to rapidly convey large volumes of information.

Next came the fusion of visualization techniques with statistical methods.

Analysts introduced methods for statistical storytelling, where mathematical functions are applied through a series of steps to describe interesting trends, eliminate infeasible alternatives, and discover anomalies so that decision makers can visualize and understand a complex decision space quickly and easily.

Geographic information systems (GISs) and the science of geoinformatics had been used since the late 1960s to display spatial information as maps and charts.

Increasingly, software tools like JMP, Tableau, GeoIQ, MapLarge, and ESRI ArcGIS have included advanced spatial and temporal analysis tools that advance the science of data analysis. The ability to analyze trends and patterns over space and time is called spatiotemporal analysis.

1.1.4 The Need for a New Methodology

The fourth age of intelligence is characterized by three drivers: the changing nature of threats, the convergence in information technology, and the availability of multi-INT analytic tools. Together they create the conditions necessary for a revolution in intelligence tradecraft. This class of methods must address nonstate actors, leverage technological advances, and shift the focus of intelligence from reporting the past to anticipating the future. We refer to this revolution as ABI, a method that former RAND analyst and National Intelligence Council chairman Greg Treverton has called the most important intelligence analytic method coming out of the wars in Iraq and Afghanistan.

1.2 Introducing ABI

Intelligence analysts deployed to Iraq and Afghanistan to hunt down terrorists found that traditional intelligence methods were ill-suited for the mission. The traditional intelligence cycle begins with the target in mind (Figure 1.3), but terrorists were usually indistinguishable from other people around them. The analysts, digital natives savvy in visual analytic tools, began by integrating already collected data in a geographic area. Often, the only common metadata between two data sets was time and location, so they applied spatiotemporal analytic methods to develop trends and patterns from large, diverse data sets. These data sets described activities: events and transactions conducted by entities (people or vehicles) in an area.

Sometimes, the analysts would discover a series of unusual events that correlated across data sets. When integrated, it represented the pattern of life of an entity. The entity sometimes became a target. The subsequent collection and analysis on this entity, the resolution of identity, and the anticipation of future activities based on the pattern of life produced a new range of intelligence products that improved the effectiveness of the counterterrorism mission. This is how ABI got its name.
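One simple way to picture a pattern of life is as a count of where and when an entity (or a proxy for it) is observed. The Python sketch below is a minimal illustration under that assumption. The place names, timestamps, and the choice to bucket observations by hour of day are hypothetical and are not drawn from any operational method.

```python
from collections import Counter
from datetime import datetime


def pattern_of_life(events):
    """Count how often an entity is observed at each (place, hour of day)."""
    return Counter((e["place"], e["time"].hour) for e in events)


# Hypothetical observations of a single entity over three days.
events = [
    {"place": "market", "time": datetime(2014, 6, 2, 8, 5)},
    {"place": "market", "time": datetime(2014, 6, 3, 8, 40)},
    {"place": "warehouse", "time": datetime(2014, 6, 3, 22, 15)},
    {"place": "market", "time": datetime(2014, 6, 4, 8, 20)},
]

profile = pattern_of_life(events)
routine = profile.most_common(1)  # [(('market', 8), 3)]
print(routine)
```

In this toy data the recurring morning visit to the market is the routine, and the single late-night warehouse observation stands out against it. Such a departure is a hint about where to look, not a conclusion.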

ABI is a new methodology—a series of analytic methods and enabling technologies—based on the following four empirically derived principles, which are distinct from traditional intelligence methods.

• Georeference to discover: Focusing on spatially and temporally correlating multi-INT data to discover key events, trends, and patterns.

• Data neutrality: Prizing all data, regardless of the source, for analysis.

• Sequence neutrality: Realizing that sometimes the answer arrives before you ask the question.

• Integration before exploitation: Correlating data as early as possible, rather than relying on vetted, finished intelligence products, because seemingly insignificant events in a single INT may be important when integrated across multi-INT.

While various intelligence agencies, working groups, and government bodies have offered numerous definitions for ABI, we define it as “a set of spatiotemporal analytic methods to discover correlations, resolve unknowns, understand networks, develop knowledge, and drive collection using diverse multi-INT data sets.”

ABI’s most significant contribution to the fourth age of intelligence is a shift in focus of the intelligence process from reporting the known to discovery of the unknown.

1.2.1 The Primacy of Location

When you think about it, everything and everybody has to be somewhere.

—The Honorable James R. Clapper, 2004

The primacy of location is the central principle behind the new intelligence methodology ABI. Since everything happens somewhere, all activities, events, entities, and relationships have an inherent spatial and temporal component whether it is known a priori or not.

Hard problems cannot usually be solved with a single data set. The ability to reference multiple data sets across multiple intelligence domains (multi-INT) is a key enabler to resolve entities that lack a signature in any single domain of collection. In some cases, the only common metadata between two data sets is location and time, which allows for location-based correlation of the observations in each data set, where the strengths of one compensate for the weaknesses in another.
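The idea of correlating two data sets that share only location and time can be sketched in a few lines of Python. The example below pairs observations that fall within a chosen distance and time window of each other. The record fields, the 200-meter and 10-minute thresholds, and the sample data are assumptions chosen for illustration. The pairwise loop is the simplest possible approach and would need spatial indexing to scale to large data sets.

```python
import math
from datetime import datetime, timedelta

EARTH_RADIUS_M = 6_371_000  # mean Earth radius in meters


def haversine_m(lat1, lon1, lat2, lon2):
    """Great-circle distance in meters between two latitude/longitude points."""
    p1, p2 = math.radians(lat1), math.radians(lat2)
    dphi = p2 - p1
    dlmb = math.radians(lon2 - lon1)
    a = math.sin(dphi / 2) ** 2 + math.cos(p1) * math.cos(p2) * math.sin(dlmb / 2) ** 2
    return 2 * EARTH_RADIUS_M * math.asin(math.sqrt(a))


def correlate(set_a, set_b, max_dist_m=200.0, max_dt=timedelta(minutes=10)):
    """Return id pairs of observations that are close in both space and time."""
    pairs = []
    for a in set_a:
        for b in set_b:
            close_in_time = abs(a["time"] - b["time"]) <= max_dt
            close_in_space = haversine_m(a["lat"], a["lon"], b["lat"], b["lon"]) <= max_dist_m
            if close_in_time and close_in_space:
                pairs.append((a["id"], b["id"]))
    return pairs


# Two hypothetical data sets with nothing in common except time and location.
vehicle_sightings = [
    {"id": "v1", "time": datetime(2014, 6, 1, 11, 30), "lat": 33.3152, "lon": 44.3661},
    {"id": "v2", "time": datetime(2014, 6, 1, 18, 0), "lat": 33.4000, "lon": 44.5000},
]
transactions = [
    {"id": "t1", "time": datetime(2014, 6, 1, 11, 35), "lat": 33.3155, "lon": 44.3665},
    {"id": "t2", "time": datetime(2014, 6, 2, 9, 0), "lat": 33.3152, "lon": 44.3661},
]

matches = correlate(vehicle_sightings, transactions)
print(matches)  # [('v1', 't1')]
```

Note that t2 occurs at the same place as v1 but nearly a day later, so it is not paired. Both the spatial and the temporal condition must hold.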

…the tipping point for the fourth age was the ability and impetus to integrate the concept of location into visual and statistical analysis of large, complex data sets. This was the key breakthrough for the revolution that we call ABI.

1.2.2 From Target-Based to Activity-Based

The paradigm of intelligence and intelligence analysis has changed, driven primarily by the shift in targets from the primacy of nation-states to transnational groups or irregular forces.

—Greg Treverton, RAND

A target can be a physical location like an airfield or a missile silo. Alternatively, it can be an electronic target, like a specific radio-frequency emission or a telephone number. Targets can be individuals, such as spies who you want to recruit. Targets might be objects like specific ships, trucks, or satellites. In the cyberdomain, a target might be an e-mail address, an Internet protocol (IP) address, or even a specific device. The target is the subject of the intelligence question. The linear cycle of planning and direction, collection, processing and exploitation, analysis and production, and dissemination begins and ends with the target in mind.

The term “activity-based” is the antithesis of the “target-based” intelligence model. This book describes methods and techniques for intelligence analysis when the target or the target’s characteristics are not known a priori. In ABI, the target is the output of a deductive analytic process that begins with unresolved, ambiguous entities and a data landscape dominated by events and transactions.

Targets in traditional intelligence are well-defined, predictable adversaries with a known doctrine. If the enemy has a known doctrine, all you have to do is steal the manual and decode it, and you know what they will do.

In the ABI approach, instead of scheduled collection, incidental collection must be used to gather many (possibly irrelevant) events, transactions, and observations across multiple domains. In contrast to the predictable, linear, inductive approach, analysts apply deductive reasoning to eliminate what the answer is not and narrow the problem space to feasible alternatives. When the target blends in with the surroundings, a durable, “sense-able” signature may not be discernable. Proxies for the entity, such as a communications device, a vehicle, a credit card, or a pattern of actions, are used to infer patterns of life from observations of activities and transactions.

Informal collaboration and information sharing evolved as geospatial analysis tools became more democratized and distributed. Analysts share their observations—layered as dots on a map—and tell spatial stories about entities, their activities, their transactions, and their networks.

While traditional intelligence has long implemented techniques for researching, monitoring, and searching, the primary focus of ABI methods is on discovery of the unknown, which represents the hardest class of intelligence problems.

1.2.3 Shifting the Focus to Discovery

All truths are easy to understand once they are discovered; the point is to discover them.

—Galileo Galilei

The lower left corner of Figure 1.4 represents known-knowns: monitoring. These are known locations or targets, and the focus of the analytic operation is to monitor them for change.

The targets, location, behaviors, and signatures are all known. The intelligence task is monitoring the location for change and alerting when there is activity.

The next quadrant of interest is in the upper left of Figure 1.4. Here, the behaviors and signatures are unknown, but the targets or locations are known.

The research task builds deep contextual analytic knowledge to enhance understanding of known locations and targets, which can then be used to identify more targets for monitoring and enhance the ability to provide warning.

The lower right quadrant of Figure 1.4, search, requires looking for a known signature/behavior in an unknown location.

Searching previously undiscovered areas for the new equipment is search. For obvious reasons, this laborious task is universally loathed by analysts.

The “new” function and the focus of ABI methods is the upper right. You don’t know what you’re looking for, and you don’t know where to find it. This has always been the hardest problem for intelligence analysts, and we characterize it as “new” only because the methods, tools, policies, and tradecraft have only recently evolved to the point where discovery is possible outside of simple serendipity.
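The four quadrants described above reduce to two yes-or-no questions: is the target or location known, and is the behavior or signature known? The short Python sketch below encodes that mapping. The function and parameter names are hypothetical and serve only to summarize the description of Figure 1.4 given in the text.

```python
def intelligence_task(location_known: bool, signature_known: bool) -> str:
    """Map the two axes described for Figure 1.4 to the analytic task."""
    if location_known and signature_known:
        return "monitor"   # lower left: known target, known behavior
    if location_known:
        return "research"  # upper left: known target, unknown behavior
    if signature_known:
        return "search"    # lower right: known behavior, unknown location
    return "discover"      # upper right: neither is known


for loc in (True, False):
    for sig in (True, False):
        print(loc, sig, intelligence_task(loc, sig))
```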

Discovery is a data-driven process. Analysts, ideally without bias, explore data sets to detect anomalies, characterize patterns, investigate interesting threads, evaluate trends, eliminate the impossible, and formulate hypotheses.

Typically, analysts who excel at discovery are detectives. They exhibit unusual curiosity, creativity, and critical thinking skills. Generally, they tend to be rule breakers. They get bored easily when tasked in the other three quadrants. New tools are easy for them to use. Spatial thinking, statistical analysis, hypothesis generation, and simulation make sense to them. This new generation of analysts, composed largely of millennials hired after 9/11, catalyzed the evolution of ABI methods because they were placed in an environment that required a different approach. Frankly, their lack of experience with the traditional intelligence process created an environment where something new and different was possible.

1.2.4 Discovery Versus Search

Are we saying that hunting terrorists is the same as house shopping? Of course not, but the processes have their similarities. Location (and spatial analysis) is central to the search, discovery, research, and monitoring process. Browsing metadata helps triage information and focus the results. The problem constantly changes as new entities appear or disappear. Resources are limited and it’s impossible to action every lead…

1.2.6 Summary: The Key Attributes of ABI

ABI is a new tradecraft, focused on discovering the unknown, that is well-suited for advanced multi-INT analysis of nontraditional threats in a “big data” environment.

1.3 Organization of this Textbook

This textbook is directed at entry-level intelligence professionals, practicing engineers, and research scientists familiar with general principles of intelligence and analysis. It takes a unique perspective on the emerging methods and techniques of ABI with a specific focus on spatiotemporal analytics and the associated technology enablers.

The seminal concept of “pattern of life” is introduced in Chapter 8. Chapter 8 exposes the nuances of “pattern of life” versus pattern analysis and describes how both concepts can be used to understand complex data and draw conclusions using the activities and transactions of entities. The final key concept, incidental collection, is the subject of Chapter 9. Incidental collection is a core mindset shift from target-based point collection to wide area activity-based surveillance.

A unique feature of this textbook is its focus on applications from the public domain.

1.4 Disclaimer About Sources and Methods

Protecting sources and methods is the paramount and sacred duty of intelligence professionals. This central tenet will be carried throughout this book. The development of ABI was catalyzed by advances in commercial data management and analytics technology applied to unique sources of data. Practitioners deployed to the field have the benefit of on-the-job training and experience working with diverse and difficult data sets. A primary function of this textbook is to normalize understanding across the community and inform emerging intelligence professionals of the latest advances in data analysis and visual analytics.

All of the application examples in this textbook are derived from information entirely in the public domain. Some of these examples have corollaries to intelligence operations and intelligence functions. Some are merely interesting applications of the basic principles of ABI to other fields where multisource correlation, patterns of life, and anticipatory analytics are commonplace. Increasingly, commercial companies are using similar “big data analytics” to understand patterns, resolve unknowns, and anticipate what may happen.

1.6 Suggested Readings

Readers unfamiliar with intelligence analysis, the disciplines of intelligence, and the U.S. intelligence community are encouraged to review the following texts before delving deep into the world of ABI:

• Lowenthal, Mark M., Intelligence: From Secrets to Policy. Lowenthal’s legendary text is the premier introduction to the U.S. intelligence community, the primary principles of intelligence, and the intelligence relationship to policy. The frequently updated text has been expanded to include Lowenthal’s running commentary on various policy issues including the Obama administration, intelligence reform, and Wikileaks. Lowenthal, once the assistant director of analysis at the CIA and vice chairman of Evaluation for the National Intelligence Council, is the ideal intellectual mentor for an early intelligence professional.

• George, Roger Z., and James B. Bruce, Analyzing Intelligence: Origins, Obstacles, and Innovations. This excellent introductory text by two Georgetown University professors is the most comprehensive text on analysis currently in print. It provides an overview of analysis tradecraft and how analysis is used to produce intelligence, with a focus on all-source intelligence.

• Heuer, Richards J., The Psychology of Intelligence Analysis. This book is required reading for intelligence analysts and documents how analysts think. It introduces the method of analysis of competing hypotheses (ACH) and deductive reasoning, a core principle of ABI.

• Heuer, Richards J., and Randolph H. Pherson, Structured Analytic Techniques for Intelligence Analysis. An extension of Heuer’s previous work, this is an excellent handbook of techniques for all-source analysts. Their techniques pair well with the spatiotemporal analytic methods discussed in this text.

• Waltz, Edward, Quantitative Intelligence Analysis: Applied Analytic Models, Simulations, and Games. Waltz’s highly detailed book describes modern modeling techniques for intelligence analysis. It is an essential companion text to many of the analytic methods described in Chapters 12–16.

2

ABI History and Origins

Over the past 15 years, ABI has entered the intelligence vernacular. Former NGA director Letitia Long said it is “a new foundation for intelligence analysis, as basic and as important as photographic interpretation and imagery analysis became during World War II.”

2.1 Wartime Beginnings

ABI methods have been compared to many other disciplines including submarine hunting and policing, but the modern concepts of ABI trace their roots to the Global War on Terror. According to Long, “Special operations led the development of GEOINT-based multi-INT fusion techniques on which ABI is founded.”

2.2 OUSD(I) Studies and the Origin of the Term ABI

During the summer of 2008 the technical collection and analysis (TCA) branch within the OUSD(I) determined the need for a document defining “persistent surveillance” in support of irregular warfare. The initial concept was a “pamphlet” that would briefly define persistence and expose the reader to the various surveillance concepts that supported this persistence. U.S. Central Command, the combatant command with assigned responsibility throughout the Middle East, expressed interest in using the pamphlet as a training aid and as a means to get its components to use the same vocabulary.

ABI was formally defined by the now widely circulated “USD(I) definition”:

A discipline of intelligence, where the analysis and subsequent collection is focused on the activity and transactions associated with an entity, a population, or an area of interest.

There are several key elements of this definition. First, OUSD(I) sought to define ABI as a separate discipline of intelligence like HUMINT or SIGINT: SIGINT is to the communications domain as activity-INT is to the human domain. Recognizing that the INTs are defined by an act of Congress, this definition was later softened into a “method” or “methodology.”

The definition recognizes that ABI is focused on activity (composed of events and transactions, further explored in Chapter 4) rather than a specific target. It introduces the term entity, but also recognizes that analysis of the human domain could include populations or areas, as recognized by the related study called “human geography.”

Finally, the definition makes note of analysis and subsequent collection, also sometimes referred to as analysis driving collection. This emphasizes the importance of analysis over collection—a dramatic shift from the traditional collection-focused mindset of the intelligence community. To underscore the shift in focus from targets to entities, the paper introduced the topic of “human domain analytics.”

2.3 Human Domain Analytics

Human domain analytics is the global understanding of anything associated with people. The human domain provides the context and understanding of the activities and transactions necessary to resolve entities in the ABI method. It draws on four categories of data:

• The first is biographical information, or “who they are.” This includes information directly associated with an individual.

• The second data type is activities, or “what they do.” This data category associates specific actions to an entity.

• The third data category is relational, or “who they know,” the entities’ family, friends, and associates.

• The final data category is contextual (metaknowledge), which is information about the context or the environment in which the entity is found. Examples include most of the information found within the sociocultural/human terrain studies.

Taken in total, these data categories support ABI analysts in the analysis of entities, identity resolution of unknown entities, and placing the entities’ actions in a social context.
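The four data categories can be pictured as four fields on a single record for each entity. The Python sketch below is one hypothetical way to hold them together. The class name, the field types, and the rule that an entity with no biographical data counts as unresolved are assumptions made for illustration only.

```python
from dataclasses import dataclass, field


@dataclass
class EntityRecord:
    """Hypothetical container for the four human domain data categories."""
    entity_id: str
    biographical: dict = field(default_factory=dict)  # "who they are"
    activities: list = field(default_factory=list)    # "what they do"
    relational: list = field(default_factory=list)    # "who they know"
    contextual: dict = field(default_factory=dict)    # environment of the entity

    def is_unresolved(self) -> bool:
        # Assumption: an entity with no biographical data has no resolved identity.
        return not self.biographical


# An entity known only through its activity and associations.
unknown = EntityRecord(
    entity_id="entity-001",
    activities=["vehicle observed at market, 08:05"],
    relational=["entity-002"],
)
print(unknown.is_unresolved())  # True
```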

2.5 ABI-Enabling Technology Accelerates

In December 2012, BAE Systems was awarded a multiyear $60-million contract to provide “ABI systems, tools, and support for mission priorities” under the agency’s total application services for enterprise requirements (TASER) contract [13]. While these technology developments would bring new data sources to analysts, they also created confusion as the tools became conflated with the analytical methodology they were designed around. The phrase “ABI tool” would be attached to M111 and its successor program awarded under TASER.

2.6 Evolution of the Terminology

The term ABI and the four pillars were first presented to the unclassified community during an educational session hosted by the U.S. Geospatial Intelligence Foundation (USGIF) at the GEOINT Symposium in 2010, but the term was introduced broadly by Director of National Intelligence (DNI) Clapper and NGA director Long in their remarks at the 2012 symposium [14, 15].

As wider intelligence community efforts to adapt ABI to multiple missions took shape, the definition of ABI became generalized and evolved to a broader perspective as shown in Table 2.1. NGA’s Gauthier described it as “a set of methods for discovering patterns of behavior by correlating activity data at network speed and enormous scale” [16, p. 1]. It was also colloquially described by Gauthier and Long as “finding things that don’t want to be found.”

2.7 Summary

Long described ABI as “the most important intelligence methodology of the first quarter of the 21st century,” noting the convergence of cloud computing technology, advanced tracking algorithms, inexpensive data storage, and revolutionary tradecraft that drove adoption of the methods [1].

3

Discovering the Pillars of ABI

The basic principles of ABI have been categorized as four fundamental “pillars.” These simple but powerful principles were developed by practitioners by cross-fertilizing best practices from other disciplines and applying them to intelligence problems in the field. They have evolved and solidified over the past five years as a community of interest developed around the topic. This chapter describes the origin and practice of the four pillars: georeference to discover, data neutrality, sequence neutrality, and integration before exploitation.

3.1 The First Day of a Different War

The U.S. intelligence community, along with most of the broader U.S. and Western national security apparatus, was created to fight (and is expertly tuned for) the bipolar, state-centric conflict of the Cold War. Large states with vast bureaucracies and militaries molded in their image dominated the geopolitical landscape.

3.2 Georeference to Discover: “Everything Happens Somewhere”

Georeference to discover is the foundational pillar of ABI. It was derived from the simplest of notions but proves that simple concepts have tremendous power in their application.

Where activity happens (the spatial component) is the one aspect of these diverse data that is (potentially) common. The advent of the global positioning system (GPS), and perhaps most importantly for the commercial realm, the deactivation of a mode called “selective availability,” has moved the precise capture of “where things happen” from the realm of science fiction to the reality of day-to-day living. With technological advances, location has become knowable.

3.2.1 First-Degree Direct Georeference

The most straightforward of these is direct georeferencing, in which machine-readable geospatial content, in the form of a coordinate system or known cadastral system, is present in the metadata of a piece of information. An example is the metadata (simply, “data about data”) of a photo: a GPS-enabled handheld camera or cell phone might record GPS coordinates in degrees-minutes-seconds format.
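To make the idea of machine-readable coordinates concrete, the following minimal sketch converts degrees-minutes-seconds values into the signed decimal degrees that most GIS tools ingest. The metadata dictionary is hypothetical; its field names are loosely modeled on common photo metadata tags and are an assumption, not something specified in the text.

```python
def dms_to_decimal(degrees, minutes, seconds, hemisphere):
    """Convert degrees-minutes-seconds plus a hemisphere letter to signed decimal degrees."""
    if hemisphere not in ("N", "S", "E", "W"):
        raise ValueError("hemisphere must be one of N, S, E, W")
    value = degrees + minutes / 60.0 + seconds / 3600.0
    # Southern and western hemispheres are negative by convention.
    return -value if hemisphere in ("S", "W") else value


# Hypothetical photo metadata (illustrative values only).
photo_metadata = {
    "GPSLatitude": (64, 30, 4.0),
    "GPSLatitudeRef": "N",
    "GPSLongitude": (165, 24, 23.0),
    "GPSLongitudeRef": "W",
}

lat = dms_to_decimal(*photo_metadata["GPSLatitude"], photo_metadata["GPSLatitudeRef"])
lon = dms_to_decimal(*photo_metadata["GPSLongitude"], photo_metadata["GPSLongitudeRef"])
print(round(lat, 5), round(lon, 5))
```

Because the coordinates are already present in the metadata, no human interpretation is needed before the record can be placed on a map, which is what makes this a direct georeference.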

3.2.2 First-Degree Indirect Georeference

By contrast, indirect georeferencing contains spatial information in non-machine-readable content, not ready for ingestion into a GIS.

An example of a metadata-based georeference in the same context would be a biographical profile of John Smith with the metadata tag “RESIDENCE: NOME, ALASKA.”

3.2.3 Second-Degree Georeference

Further down the georeferencing rabbit hole is the concept of a second-degree georeference. This is a special case of georeferencing where the content and metadata contain no first-degree georeferences, but analysis of the data in its context can provide a georeference.

For example, a poem about a beautiful summer day might not contain any first-degree georeferences, as it describes only a generic location. By reconsidering the poem as the “event” of “poem composition,” a georeference can be derived. Because the poet lived at a known location, and the date of the poem’s composition is also known, the “poem composition event” occurred at “the poet’s house” on “the date of composition,” creating a second-degree georeference for a poem [5].

The concept of second-degree georeferencing is how we solve the vexing problem of data that does not appear, at first glance, to be “georeferenceable.” The above example shows how, by deriving events from data, we can identify activity that is more easily georeferenceable. This is one of the strongest responses to critics of the ABI methodology who argue that much, if not most, data does not lend itself to the georeferencing and overall data-conditioning process.
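The poem example can be sketched as a small derivation step. This is an illustration under stated assumptions: the residence table, the author name, and the coordinates are all hypothetical, and the inference that a work was composed at the author's residence is itself an analytic assumption that should be carried along with the record.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass
class Event:
    description: str
    when: date
    lat: float
    lon: float
    degree: int  # 1 = first-degree georeference, 2 = derived from context


# Hypothetical contextual data: author -> (start, end, lat, lon) residence periods.
RESIDENCES = {
    "Example Poet": [(date(1850, 1, 1), date(1860, 12, 31), 42.37, -72.52)],
}


def derive_composition_event(author: str, composed_on: date, title: str) -> Optional[Event]:
    """Derive a second-degree georeference from where the author lived on the composition date."""
    for start, end, lat, lon in RESIDENCES.get(author, []):
        if start <= composed_on <= end:
            return Event(f"composition of '{title}' by {author}", composed_on, lat, lon, degree=2)
    return None  # no known residence for that date, so no georeference can be derived


event = derive_composition_event("Example Poet", date(1855, 7, 4), "A Summer Day")
print(event)
```

Keeping the `degree` field on the derived event lets later correlation steps weigh an inferred location differently from a measured one.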

3.3 Discover to Georeference Versus Georeference to Discover

It is also important to contrast the philosophy of georeference to discover with the more traditional mindset of discover to georeference. Discover to georeference is a concept often not given a name but aptly describes the more traditional approach to geographically referencing information. This traditional process, based on keyword, relational, or Boolean-type queries, is illustrated in Figure 3.2. Often, the georeferencing that occurs in this process is manual, done via copy-paste from free text documents accessible to analysts.

With discover to georeference, the first question that is asked, often unconsciously, is, “This is an interesting piece of information; I should find out where it happened.” It can also be described as “pin-mapping,” based on the process of placing pins in a map to describe events of interest. The key difference is the a priori decision that a given event is relevant or irrelevant before the process of georeferencing begins.

Using the pillar of georeference to discover, the act of georeferencing is an integral part of the act of processing data, through either first- or second-degree attributes. It is the first step of the ABI analytic process and begins before the analyst ever looks at the data.

The act of georeferencing creates an inherently spatial and temporal data environment in which ABI analysts spend the bulk of their time, identifying spatial and temporal co-occurrences and examining said co-occurrences to identify correlations. This environment naturally leads the analyst to seek more sources of data to improve correlations and subsequent discovery.
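A minimal sketch of identifying spatial and temporal co-occurrences follows. The records, source labels, and the distance and time thresholds are hypothetical choices for illustration; the great-circle distance uses the standard haversine formula, and the pairwise comparison is deliberately naive.

```python
import math
from datetime import datetime, timedelta
from itertools import combinations


def haversine_km(lat1, lon1, lat2, lon2):
    """Great-circle distance in kilometers between two latitude/longitude points."""
    r = 6371.0  # mean Earth radius in km
    p1, p2 = math.radians(lat1), math.radians(lat2)
    dphi = p2 - p1
    dlmb = math.radians(lon2 - lon1)
    a = math.sin(dphi / 2) ** 2 + math.cos(p1) * math.cos(p2) * math.sin(dlmb / 2) ** 2
    return 2 * r * math.asin(math.sqrt(a))


def co_occurrences(records, max_km=0.5, max_gap=timedelta(minutes=30)):
    """Return id pairs of records that are close in both space and time."""
    hits = []
    for a, b in combinations(records, 2):
        near = haversine_km(a["lat"], a["lon"], b["lat"], b["lon"]) <= max_km
        close_in_time = abs(a["time"] - b["time"]) <= max_gap
        if near and close_in_time:
            hits.append((a["id"], b["id"]))
    return hits


# Hypothetical georeferenced records from different sources.
records = [
    {"id": "r1", "source": "patrol_report", "lat": 33.3100, "lon": 44.3600, "time": datetime(2010, 5, 1, 8, 0)},
    {"id": "r2", "source": "sensor", "lat": 33.3102, "lon": 44.3603, "time": datetime(2010, 5, 1, 8, 10)},
    {"id": "r3", "source": "open_source", "lat": 33.4000, "lon": 44.5000, "time": datetime(2010, 5, 1, 8, 5)},
    {"id": "r4", "source": "sensor", "lat": 33.3101, "lon": 44.3601, "time": datetime(2010, 5, 2, 8, 0)},
]

print(co_occurrences(records))
```

A co-occurrence found this way is only a candidate for examination, not a conclusion. Comparing every pair scales quadratically, so a real environment would rely on a spatial index rather than this loop.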

3.4 Data Neutrality: Seeding the Multi-INT Spatial Data Environment

Data neutrality is the premise that all data may be relevant regardless of the source from which it was obtained. This is perhaps the most overlooked of the pillars of ABI because it is so simple as to be obvious. Some may dismiss this pillar as not important to the overall process of ABI, but it is central to the need to break down the cultural and institutional barriers between INT-specific “stovepipes” and consider all possible sources for understanding entities and their activities.

As the pillars were being developed, the practitioners who helped to write much of ABI’s lexicon spoke of data neutrality as a goal instead of a consequence. The importance of this distinction will be explored below, as it relates to the first pillar of georeference to discover.

Imagine again you are the analyst described in the prior section. In front of you is a spatial data environment in your GIS consisting of data obtained from many different sources of information, everything from reports from the lowest level of foot patrols to data collected from exquisite national assets. This data is represented as vectors: dots and lines (events and transactions) on your map. As you begin to correlate data via spatial and temporal attributes, you realize that data is data, and no one data source is necessarily favored over the others. The second pillar of ABI serves to then reinforce the importance of the first and naturally follows as a logical consequence.

Given that the act of data correlation is a core function of ABI, the conclusion that there can never be “too much” data is inevitable. “Too much,” in the inexact terms of an analyst, often means “more than I have the time, inclination, or capacity to understand,” but more often than that means “data that is not in a format conducive to examination in a single environment.” This becomes an important feature in understanding the data discovery mindset.

As the density of data increases, the necessity for smart technology for attribute correlation becomes a key component of the technical aspects of ABI. This challenge is exacerbated by the fact that some data sources include inherent uncertainty and must be represented by fuzzy boundaries, confidence bands, spatial polygons, ellipses, or circles representing circular error probability (CEP).
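One coarse way to correlate observations that carry positional uncertainty is to treat each as a circle and ask whether the circles overlap. The sketch below assumes each record carries a hypothetical `cep_m` field giving its CEP radius in meters. Because a CEP circle is conventionally the radius containing roughly half of the probable positions, overlap is a screening test, not a probability calculation.

```python
import math


def distance_m(lat1, lon1, lat2, lon2):
    """Great-circle (haversine) distance in meters."""
    r = 6371000.0  # mean Earth radius in meters
    p1, p2 = math.radians(lat1), math.radians(lat2)
    dphi = p2 - p1
    dlmb = math.radians(lon2 - lon1)
    a = math.sin(dphi / 2) ** 2 + math.cos(p1) * math.cos(p2) * math.sin(dlmb / 2) ** 2
    return 2 * r * math.asin(math.sqrt(a))


def circles_overlap(a, b):
    """True when two uncertainty circles intersect: center distance <= sum of radii."""
    d = distance_m(a["lat"], a["lon"], b["lat"], b["lon"])
    return d <= a["cep_m"] + b["cep_m"]


# Hypothetical observations: one precise fix and two coarse ones.
precise = {"lat": 0.0, "lon": 0.0, "cep_m": 10.0}
coarse_near = {"lat": 0.0, "lon": 0.004, "cep_m": 500.0}
coarse_far = {"lat": 0.0, "lon": 0.010, "cep_m": 500.0}

print(circles_overlap(precise, coarse_near))
print(circles_overlap(precise, coarse_far))
```

Ellipses and polygons need more careful geometry than this, and fuzzy boundaries may call for a graded score instead of a yes-or-no answer.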

The spatial and temporal environment provides two of the three primary data filters for the ABI methodology: correlation on location and correlation on time. The third, correlation on attributes, becomes important to rule out false-positive correlations that have occurred solely based on space and time.

The nature of many data sources almost always requires human judgment regarding correlation across multiple domains or sources of information. Machine learning continues to struggle with these judgments, especially because it is difficult to describe the intangible context in which potential correlations occur.

Part of the importance of the data neutrality mindset is realizing the unique perspective that analysts bring to data analysis; moreover, this perspective cannot be easily realized in one type of analyst but is at its core the product of different perspectives collaborating on a similar problem set. This syncretic approach to analysis was central to the revolution of ABI, with technical analysts from two distinct intelligence disciplines collaborating and bringing their unique perspectives to their counterparts’ data sets.

3.5 Integration Before Exploitation: From Correlation to Discovery

The traditional intelligence cycle is a process often referred to as tasking, collection, processing, exploitation, and dissemination (TCPED).

TCPED is a familiar concept to intelligence professionals working in various technical disciplines who are responsible for making sense out of data in domains such as SIGINT and IMINT. Although often depicted as a cycle as shown in Figure 3.4, the process is also described as linear.

From a philosophical standpoint, TCPED makes several key assumptions:

• The ability to collect data is the scarcest resource, which implies that tasking is the most critical part of the data exploitation process. The first step of the process begins with tasking against a target, which assumes the target is known a priori.

• The most efficient way to derive knowledge in a single domain is through focused analysis of data, generally to the exclusion of specific contextual data.

• All data that is collected should be exploited and disseminated.

The limiting factor for CORONA missions was the number of images that could be taken by the satellite. In this model, tasking becomes supremely important: There are many more potential targets than can be imaged on a single roll of film. Because satellite imaging in the CORONA era was a constrained exercise, processes were put in place to vet, validate, and rank-order tasking through an elaborate bureaucracy.

The other reality of phased exploitation is that it was a product of an adversary with signature and doctrine that, while not necessarily known, could be deduced or inferred over repeated observations. Large, conventional, doctrine-driven adversaries like the Soviet Union not only had large signatures, but their observable activities played out over a time scale that was easily captured by infrequent, scheduled revisit with satellites like CORONA. Although they developed advanced denial and deception techniques employed against imaging systems, both airborne and national, their large, observable activities were hard to hide.

But where is integration in this process? There is no “I,” big or small, in TCPED. Rather, integration was a subsequent step conducted very often by completely different analysts.

In today’s era of reduced observable signatures, fleeting enemies, and rapidly changing threat environments, integration after exploitation is seldom timely enough to provide decision advantage. The traditional concept of integration after exploitation, where finished reporting is only released when it exceeds the single-INT reporting threshold, is shown in Figure 3.6. This approach not only suffers from a lack of timeliness but also is limited by the fact that only information deemed significant within a single-INT domain (without the contextual information provided by other INTs) is available for integration. For this reason, the single-INT workflows are often derisively referred to by intelligence professionals as “stovepipes” or as “stovepiped exploitation.”

While “raw” is a loaded term with specific meanings in certain disciplines and collection modalities, the theory is the same: The data you find yourself georeferencing, from any source you can get your hands on, is data that very often has not made it into the formal intelligence report preparation and dissemination process. It is a very different kind of data, one for which the existing processes of TCPED and the intelligence cycle are inexactly tuned. Much of this information is well below the single-INT reporting threshold in Figure 3.6, but data neutrality tells us that while the individual pieces of information may not exceed the domain thresholds, the combined value of several pieces in an integrated review may not only exceed reporting thresholds but could reveal unique insight into a problem that would otherwise be undiscoverable to the analyst.
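The threshold argument can be illustrated with a toy calculation. Everything in this sketch is an assumption made for illustration: the significance scores, the threshold value, the space-time cell labels, and especially the combination rule. The text does not define how “combined value” is computed; the noisy-OR rule used here treats the observations as independent, which real data may not satisfy.

```python
from collections import defaultdict

THRESHOLD = 0.7  # hypothetical single-INT reporting threshold

# Hypothetical observations, each scored within its own discipline and
# binned into a shared space-time cell by prior georeferencing.
observations = [
    {"source": "SIGINT", "cell": "grid-17/day-3", "score": 0.40},
    {"source": "IMINT", "cell": "grid-17/day-3", "score": 0.50},
    {"source": "patrol_report", "cell": "grid-17/day-3", "score": 0.45},
    {"source": "IMINT", "cell": "grid-42/day-3", "score": 0.30},
]


def stovepiped(obs, threshold=THRESHOLD):
    """Integration after exploitation: only individually significant items survive."""
    return [o for o in obs if o["score"] >= threshold]


def integrated(obs, threshold=THRESHOLD):
    """Integration before exploitation: combine scores per cell, then apply the threshold."""
    by_cell = defaultdict(list)
    for o in obs:
        by_cell[o["cell"]].append(o["score"])
    combined = {}
    for cell, scores in by_cell.items():
        miss = 1.0
        for s in scores:
            miss *= 1.0 - s  # noisy-OR: chance that every observation is insignificant
        combined[cell] = 1.0 - miss
    return {cell: value for cell, value in combined.items() if value >= threshold}


print(stovepiped(observations))
print(integrated(observations))
```

In this toy data no single observation clears the threshold, so the stovepiped path reports nothing, while the three weak observations that share a cell clear it together.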

TCPED is a dated concept because of its inherent emphasis on the tasking and collection functions. The mindset that collection is a limited commodity influences and biases the gathering of information by requiring analysts to decide a priori what is important. This is inconsistent with the goals of the ABI methodology. Instead, ABI offers a paradigm more suited to a world in which data has become not a scarcity, but a commodity: the relative de-emphasis of tasking collection versus a new emphasis on the tasking of analysis and exploitation.

The result of being awash in data is that no longer can manual exploitation processes scale. New advances in collection systems like the constellation of small satellites proposed by Google’s Skybox will offer far more data than even a legion of trained imagery analysts could possibly exploit. There are several solutions to this problem of “drowning in data”:

• Collect less data (or perhaps, less irrelevant data and more relevant data);

• Integrate data earlier, using correlations to guide labor-intensive exploitation processes;

• Use smart technology to move techniques traditionally deemed “exploitation” into the “processing” stage.

These three solutions are not mutually exclusive, though note that the first two represent philosophically divergent viewpoints on the problem of data. ABI naturally chooses both the second and third solutions. In fact, ABI is one of a small handful of approaches that actually becomes far more powerful as the volume of activity data increases, because the probability of finding correlations increases with it.

The analytic process emphasis in ABI also bears resemblance to the structured geospatial analytic method (SGAM), first posited by researchers at Penn State University.

Foraging, then, is not only a process that analysts use but also an attitude to be embedded in the analytical mindset: Foraging is continual, spanning specific lines of inquiry and extending beyond the boundaries of specific questions, which turns the “foraging process” into a consistent quest for more data.

Another implication is precisely where in the data acquisition chain an ABI analyst should ideally be placed. Rather than putting integrated analysis at the end of the TCPED process, this concept argues for placing the analyst as close to the data collection point (or point of operational integration) as possible. While this differs greatly for tactical missions versus strategic missions, the result of placing the analyst as close as possible to the data acquisition and processing components is clear: The analyst has additional opportunities not only to acquire new data but also to affect the acquisition and processing of data from the ground up, making more data available to the entire enterprise through his or her individual efforts.

3.6 Sequence Neutrality: Temporal Implications for Data Correlation

Sequence neutrality is perhaps the least understood and most complex of the pillars of ABI. The first three pillars are generally easily understood after a sentence or two of explanation (though they have deeper implications for the analytical process as we continually explore their meaning). Sequence neutrality, on the other hand, forces us to consider—and in many ways, reconsider—the implications of temporality with regard to causality and causal reasoning. As ABI moves data analysis to a world governed by correlation rather than causation, the specter of causation must be addressed.

In epistemology, this concept is described as narrative fallacy. Nassim Taleb, in his 2007 work The Black Swan, explains it as “[addressing] our limited ability to look at sequences of facts without weaving an explanation into them, or, equivalently, forcing a logical link, an arrow of relationship upon them. Explanations bind facts together. They make them all the more easily remembered; they help them make more sense” [12]. What is important in Taleb’s statement is the concept of sequence: Events occur in order, and we weave a logical relationship around them.

As events happen in sequence, we chain them together even given our limited perspective on the accuracy with which those events represent reality. When assessing patterns (true patterns, not correlations) in single-source data sets, time proves to be a useful filter, presuming that the percentage of the “full” data set represented remains relatively consistent. As we introduce additional data sets, the potential gaps multiply, causing uncertainty to increase exponentially. In intelligence, as many data sets are acquired in an adversarial rather than cooperative fashion (as opposed to in traditional civil GIS approaches, or even crime mapping approaches), this concept becomes so important that it is given a name: sparse data.

You are integrating the data well before stovepiped exploitation and have created a data-neutral environment in which you can ask complex questions of the data. This enables and illuminates a key concept of sequence neutrality: The data itself drives the kinds of questions that you ask. In this way, we express a key component of sequence neutrality as “understanding that we have the answers to many questions we do not yet know to ask.”

The corollary to this realization is the importance of forensic correlation versus linear-forward correlation. If we have the answers to many questions in our spatial-temporal data environment, it then follows logically that the first place to search for answers—to search for correlations—is in the data environment we have already created. Since the data environment is based on what has already been collected, the resultant approach is necessarily forensic. Look backward, before looking forward.

From card catalogs and libraries we have moved to search algorithms and metadata, allowing us as analysts to quickly and efficiently employ a forensic, research-based approach to seeking correlations.

As software platforms evolved, more intuitive time-based filtering was employed, allowing analysts to easily “scroll through time.” As with many technological developments, however, there was also a less obvious downside related to narrative fallacy and event sequencing: The time slider allowed analysts to see temporally referenced data occur in sequence, reinforcing the belief that because certain events happened after other events, they may have been caused by them. It also made it easy to temporally search for patterns in data sets: useful again in single data sets, but potentially highly misleading in multisourced data sets due to the previously discussed sparse data problem. Sequence neutrality, then, is not only an expression of the forensic mindset but a statement of warning to the analyst to consider the value of sequenced versus nonsequenced approaches to analysis. Humans have an intuitive bias to see causality when there is only correlation. We caution against use of advanced analytic tools without the proper training and mindset adjustment.

3.6.1 Sequence Neutrality’s Focus on Metadata: Section 215 and the Bulk Telephony Metadata Program Under the USA Patriot Act

By positing that all data represents answers to certain questions, sequence neutrality implores us to collect and preserve the maximum amount of data possible, limited only by storage space and cost. It also calls for the creation of indexes within supermassive data sets, allowing us to zero in on key attributes of data that may represent only a fraction of the total data size.
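The value of an index can be shown with a minimal sketch. The records and the `device` attribute are hypothetical; the point is only that a lookup by attribute touches the handful of matching records without scanning the full data set.

```python
from collections import defaultdict


def build_index(records, key):
    """Map each value of `key` to the positions of the records that carry it."""
    index = defaultdict(list)
    for position, record in enumerate(records):
        if key in record:
            index[record[key]].append(position)
    return index


# Hypothetical preserved records; most of each record's bulk is irrelevant to the lookup.
records = [
    {"id": 1, "device": "dev-A", "payload": "..."},
    {"id": 2, "device": "dev-B", "payload": "..."},
    {"id": 3, "device": "dev-A", "payload": "..."},
    {"id": 4, "payload": "..."},  # no device attribute; simply not indexed
]

device_index = build_index(records, "device")

# A question asked long after collection is answered from data already held.
matches = [records[i]["id"] for i in device_index.get("dev-A", [])]
print(matches)
```

The index is built once, at ingest, and can then answer questions that nobody had thought to ask when the data was collected.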

A controversial provision of the USA PATRIOT Act, Section 215, allows the director of the Federal Bureau of Investigation (or designee) to seek access to “certain business records” which may include “any tangible things (including books, records, papers, documents, and other items) for an investigation to protect against international terrorism or clandestine intelligence activities, provided that such investigation of a United States person is not conducted solely upon the basis of activities protected by the first amendment to the Constitution.”

3.7 After Next: From Pillars, to Concepts, to Practical Applications

The pillars of ABI represent the core concepts, as derived by the first practitioners of ABI. Rather than a framework invented in a classroom, the pillars were based on the actual experiences of analysts in the field, working with real data against real mission sets. It was in this environment, forged by the demands of asymmetric warfare and enabled by accelerating technology, that ABI emerged as one of the first examples of data-driven intelligence analysis approaches, focused primarily on spatial and temporal correlation as a means to discover.

The foraging-sensemaking, data-centric, sequence-neutral analysis paradigm of ABI conflicts with the linear-forward TCPED-centric approaches used in “tipping-cueing” constructs. The tip/cue concept slews (moves) sensors to observed or predicted activity based on forecasted sensor collection, accelerating warning on known knowns. This concept ignores the wealth of known data about the world in favor of simple additive constructs that, if not carefully understood, risk biasing analysts with predetermined conclusions from arrayed collection systems.

While some traditional practitioners are uncomfortable with the prospect of “releasing unfinished intelligence,” the ABI paradigm—awash in data—leverages the power of “everything happens somewhere” to discover the unknown. As a corollary, when many things happen in the same place and time, this is generally an indication of activity of interest. Correlation across multiple data sources improves our confidence in true positives and eliminates false positives.

4

The Lexicon of ABI

The development of ABI also included the development of a unique lexicon, terminology, and ontology to accompany it.

Activity data “comprises physical actions, behaviors, and information received about entities. The focus of analysis in ABI, activity is the overarching term used for ‘what entities do.’ Activity can be subdivided into two types based on its accompanying metadata and analytical use: events and transactions”.

4.1 Ontology for ABI

One of the challenges of intelligence approaches for the data-rich world that we now live in is integration of data.

As the diversity of data increased, analysts were confronted with the problem that most human analysts deal with today: How does one represent diverse data in a common way?

An ontology is the formal naming and documentation of interrelationships between concepts and terms in a discipline. Established fields like biology and telecommunications have well-established standards and ontologies. As the diversity of data and the scope of a discipline increase, so does the complexity of the ontology. If the ontology becomes too rigid and requires too many committee approvals to adapt to change, it cannot easily account for new data types that emerge as technology advances.

Moreover, with complex ontologies for data, complex environments are required for analysis, and it becomes extraordinarily difficult to correlate and connect data (to say nothing of conclusions derived from data correlations) in any environment other than a pen-to-paper notebook or a person’s mind.

4.2 Activity Data: “Things People Do”

The first core concept that “activity” data reinforces is the idea that ABI is ultimately about people, whom, in ABI, we primarily refer to as “entities.”

Activity in ABI is information relating to “things people do.” While this is perhaps a simplistic explanation, it is important to the role of ABI. In ABI parlance, activities are not about places or equipment or objects.

4.2.1 “Activity” Versus “Activities”

The vernacular and book title use the term “activity-based intelligence,” but in early discussions, the phrase was “activities-based intelligence.” Activities are the differentiated, atomic, individual activities of entities (people). Activity is a broad construct to describe aggregated activities over space and time.

4.2.2 Events and Transactions

The introduction to this chapter defined activity data as “physical actions, behaviors, and information received about entities” and divided it into two categories: events and transactions. These types are distinguished based on their metadata and utility for analysis. To limit the scope of the ABI ontology (translation: to avoid making an ontology that describes every possible action that could be performed by every possible type of entity), we specifically categorize all activity data into either an event or transaction based on the metadata that accompanies the data of interest.
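A hedged sketch of metadata-driven categorization follows. The field names and the decision rule are assumptions made for illustration: a record that names two proxies and has a beginning and an end is treated as a transaction, and a record with a location and a time is treated as an event. The chapter describes the distinction in prose and does not prescribe this rule.

```python
def classify(record):
    """Bin a record as 'event' or 'transaction' from the metadata fields it carries."""
    has_parties = "from_proxy" in record and "to_proxy" in record
    has_span = "start" in record and "end" in record
    if has_parties and has_span:
        return "transaction"  # an exchange between proxies with a finite beginning and end
    if "lat" in record and "lon" in record and "time" in record:
        return "event"  # something that happened at a place and time
    return "unclassified"  # not enough metadata to bin the record


# Hypothetical records.
sighting = {"lat": 33.31, "lon": 44.36, "time": "2010-05-01T08:00"}
call = {"from_proxy": "dev-A", "to_proxy": "dev-B", "start": "08:00", "end": "08:03"}
note = {"text": "free-text remark with no usable metadata"}

print(classify(sighting), classify(call), classify(note))
```

A single-label function like this is a simplification. As Section 4.2.4 explains, some data can be treated as both an event and a transaction depending on its analytical use.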

A person living in a residence provides a very different kind of event, one that is far less specific. While a residential address or location can also be considered biographical data, the fact of a person living in a specific place is treated as an event because of its spatial metadata component.

In all three examples, spatial metadata is the most important component.

The concept of analyzing georeferenced events is not specific to military or intelligence analysis. The GDELT project maintains a 100% free and open database of 300 kinds of events using data in over 100 languages with daily updates from January 1, 1979, to the present. The database contains over 400 million georeferenced data points.

Characterization is an important concept because it can sometimes appear as if we are using events as a type of context. In this way, activities can characterize other activities. This is important because most activity conducted by entities does not occur in a vacuum; it occurs simultaneously with activities conducted by different entities that occur in either the same place or time—and sometimes both.

Events that occur in close proximity provide us an indirect way to relate entities together based on individual data points. There is, however, a more direct way to relate entities together through the second type of activity data: transactions.

4.2.3 Transactions: Temporal Registration

Transactions in ABI provide us with our first form of data that directly relates entities. A transaction is defined as “an exchange of information between entities (through the observation of proxies) and has a finite beginning and end”. This exchange of information is essentially the instantaneous expression of a relationship between two entities. This relationship can take many forms, but it exists for at least the duration of the transaction.

Transactions are of supreme importance in ABI because they represent relationships between entities. Transactions are typically observed between proxies, or representations of entities, and are therefore indirect representations of the entities themselves.

For example, police performing a stakeout of a suspect’s home may not observe the entity of interest, but they may follow his or her vehicle. The vehicle is a proxy. The departure from the home is an event. The origin-destination motion of the vehicle is a transaction. Analysts use transactions to connect entities and locations together, depending on the type of transaction.
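The stakeout example can be sketched as data. The proxy identifiers, the place names, and the proxy-to-entity table are hypothetical, and resolving a proxy to an entity is treated here as a simple lookup even though in practice it is an uncertain analytic judgment.

```python
from collections import defaultdict

# Hypothetical resolution table: which entity each observed proxy is believed to represent.
PROXY_TO_ENTITY = {"vehicle-ABC123": "suspect-1"}

# Hypothetical origin-destination transactions observed for vehicles (proxies).
transactions = [
    {"proxy": "vehicle-ABC123", "origin": "home", "destination": "warehouse", "start": "08:05", "end": "08:40"},
    {"proxy": "vehicle-ABC123", "origin": "warehouse", "destination": "cafe", "start": "12:10", "end": "12:25"},
    {"proxy": "vehicle-XYZ789", "origin": "cafe", "destination": "depot", "start": "12:30", "end": "13:00"},
]


def locations_by_entity(transactions, proxy_to_entity):
    """Connect entities to locations through the transactions of their proxies."""
    linked = defaultdict(set)
    for t in transactions:
        # Unresolved proxies are kept rather than dropped; they may matter later.
        entity = proxy_to_entity.get(t["proxy"], f"unresolved:{t['proxy']}")
        linked[entity].update((t["origin"], t["destination"]))
    return dict(linked)


links = locations_by_entity(transactions, PROXY_TO_ENTITY)
print(links)
```

Retaining the unresolved vehicle reflects the point that the entity behind a proxy may be unknown at the time of observation. Here both vehicles touch the same cafe, which is a lead to examine, not a conclusion.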

Transactions come in two major subtypes: physical transactions and logical transactions. Physical transactions are exchanges that occur primarily in physical space, or, in other words, the real world.

Logical transactions represent the other major subtype of transaction. These types of transactions are easier to join directly to proxies for entities (and therefore, the entities themselves) because the actual transaction occurs in cyberspace as opposed to physical space.

4.2.4 Event or Transaction? The Answer is (Sometimes) Yes

Defining data as either an event or a transaction is as much a function of understanding its role in the analytical process as it is about recognizing the metadata fields present and “binning” it into one of two large groups. Consequently, there are certain data types that can be treated as both events and transactions depending on the circumstances and analytical use.

4.3 Contextual Data: Providing the Backdrop to Understand Activity

One of the important points to understand with regard to activity data is that its full meaning is often unintelligible without understanding the context in which observed activity is occurring.

“Contextualization is crucial in transforming senseless data into real information”

Activity data in ABI is the same: To understand it fully, we must understand the context in which it occurs, and context is a kind of data all unto itself.

There are many different kinds of contextual data.

Activity data and contextual data help us understand the nature of events and transactions, and sometimes even anticipate what might happen.

4.4 Biographical Data: Attributes of Entities

Biographical data provides information about an entity: name, age, date of birth, and other similar attributes. Because ABI is as much about entities as it is about activity, considering the types of data that apply specifically to entities is extremely important. Biographical data provides analysts with context to understand the meaning of activity conducted between entities.

The process of entity resolution (fundamentally, disambiguation) enables us to understand additional biographical information about entities.

Police departments, intelligence agencies, and even private organizations have long desired to understand specific details about individuals; therefore, what is it that makes ABI a fundamentally different analytic methodology? The answer is in the relationship of this biographical data to events and transactions described in Sections 4.2.2–4.2.4 and the fusion of different data types across the ABI ontology at speed and scale.

Unlike in more traditional approaches, wherein analysts might start with an individual of interest and attempt to “fill out” the baseball card, ABI starts with the events and transactions (activity) of many entities, ultimately attempting to narrow down to specific individuals of interest. This is one of the techniques that ABI uses to conquer the problem of unknown individuals in a network, which guards against the possibility that the most important entities might be ones that are completely unknown to individual analysts.

The final piece of the “puzzle” of ABI’s data ontology is relating entities to each other. Unlike with transactions, however, here we begin to understand generalized links and macronetworks. Fundamentally, this is relational data.

4.5 Relational Data: Networks of Entities

Entities do not exist in vacuums.

Considering the context of relationships between entities is also of extreme importance in ABI. Relational data tells us about the entity’s relationships to other entities, through formal and informal institutions, social networks, and other means.

Initially, it is difficult to differentiate relational data from transaction data. Both data types are fundamentally about relating entities together; what, therefore, is the difference between the two?

The answer is that one type, transactions, represents specific expressions of a relationship, while the other type, relational data, is the generalized data based on both biographical data and activity data relevant to specific entities.

The importance of understanding general relationships between entities cannot be overstated; it is one of several effective ways to contextualize specific expressions of relationships in the form of transactions. Traditionally, this process would be to simply use specific data to form general conclusions (an inductive process, explored in Chapter 5). In ABI, however, deductive and abductive processes are preferred (whereby the general informs our evaluation of the specific). In the context of events and transactions, our understanding of the relational networks pertinent to two or more entities can help us determine whether connections between events and transactions are the product of mere coincidence (or density of activity in a given environment) or the product of a relationship between individuals or networks.

Social network analysis (SNA) can be an important complementary approach to ABI, but each focuses on different aspects of data and seeks a fundamentally different outcome, indicating that the two are not duplicative approaches.

What ABI and SNA share, however, is an appreciation for the importance of understanding entities and relationships as a method for answering particular types of questions.

4.6 Analytical and Technological Implications

Relational and biographical information regarding entities is supremely important for contextualizing events and transactions, but unlike earlier approaches to analysis and traditional manhunting, focusing on specific entities from the outset is not the hallmark innovation of ABI.

5

Analytical Methods and ABI

Over the past five years, the intelligence community and the analytic corps have adopted the term ABI and ABI-like principles into their analytic workflows. While the methods have easily been adapted by those new to the field (especially those “digital natives” with strong analytic credentials from their everyday lives), traditionalists have been confused about the nature of this intelligence revolution.

5.1 Revisiting the Modern Intelligence Framework

John Hollister Hedley, a long-serving CIA officer and editor of the President’s Daily Brief (PDB), outlines three broad categories of intelligence: 1) strategic or “estimative” intelligence; 2) current intelligence; and 3) basic intelligence.

“Finished” intelligence continues to be the frame around which much of today’s intelligence literature is constructed.

Our existing intelligence framework needs expansion to account for ABI and other methodologies sharing similar intellectual approaches.

5.2 The Case for Discovery

In an increasingly data-driven world, the possibility of analytical methods that do not square with our existing categories of intelligence seems inevitable. The authors argue that ABI is the first of potentially many methods that belong in this category, which can be broadly labeled as “discovery,” sitting equally alongside current intelligence, strategic intelligence, and basic intelligence.

What characterizes discovery? Most intelligence analysts, many of whom are naturally inquisitive, already conduct aspects of discovery instinctively as they go about their day-to-day jobs. But there has been a growing chorus of concerns from both the analytical community and IC leadership that intelligence production has become increasingly driven by specific tasks and questions posed by policymakers and warfighters. In part, this is understandable: If policymakers and warfighters are the two major customer sets served by intelligence agencies, then it is natural for these agencies to be responsive to the perceived or articulated needs of those customers. However, need responsiveness does not encompass the potential to identify correlations and issues previously unknown or poorly understood by consumers of intelligence production. This is where discovery comes in: the category of intelligence primarily focused on identifying relevant and previously unknown potential information to provide decision advantage in the absence of specific requirements to do so.

Institutional innovation often assumes (implicitly) a desire to innovate that is equally distributed across a given employee population. This egalitarian model of innovation, however, is belied by actual research showing that creativity is more concentrated in certain segments of the population.

If “discovery” in intelligence is similar to “innovation” in technology, one consequence is that both the desire to perform “discovery” and success at performing it are unequally distributed across the population of intelligence analysts, and that different analysts will want to (and be able to) spend different amounts of time on “discovery.” Innovation is about finding new things based on a broad understanding of needs but lacking specific subtasks or requirements.

ABI is one set of methods under the broad heading of discovery, but other methods, some familiar to the world of big data, also fit under that heading. ABI’s focus on spatial and temporal correlation for entity resolution through disambiguation is a specific set of methods designed for the specific problem of human networks.

Data neutrality’s application puts information gathered from open sources and social media up against information collected from clandestine and technical means. Rather than biasing analysis in favor of traditional sources of intelligence data, social media data is brought into the fold without establishing a separate exploitation workflow.

One of the criticisms of the Director of National Intelligence (DNI) Open Source Center, and the creation of OSINT as another domain of intelligence, was that it effectively served to create another stovepipe within the intelligence world.

ABI’s successes came from partnering with, not replacing, single-INT analysts in battlefield tactical operations centers (TOCs).

The all-source analysis field is more typically (though not always) focused on higher-order judgments and adversary intentions; it effectively operates at a level of abstraction above both ABI and single-INT exploitation.

This is most evident in approaches to strategic issues dealing with state actors; all-source analysis seeks to provide a comprehensive understanding of current issues enabling intelligent forecasting of future events, while ABI focuses on entity resolution through disambiguation (using identical methodological approaches found on the counterinsurgency/counterterrorism battlefield) relevant to the very same state actors.

5.4 Decomposing an Intelligence Problem for ABI

One of the critical aspects of properly applying ABI is asking the “right” questions. In essence, the challenge is to decompose a high-level intelligence problem into a series of subproblems, often posed as questions, that can potentially be answered using ABI methods.

As ABI focuses on disambiguation of entities, the problem must be decomposed to a level where disambiguation of particular entities helps fill intelligence gaps relating to the near-peer state power. As subproblems are identified, approaches or methods to address the specific subproblems are aligned to each subproblem in turn, creating an overall approach for tackling the larger intelligence problem. In this case, ABI does not become directly applicable to the overall intelligence problem until the subproblem specifically dealing with the pattern of life of a group of entities is extracted from the larger problem.

Another example problem to which ABI would be applicable is identifying unknown entities outside of formal leadership structures who may be key influencers. This is done by analyzing the entities present at a location known to be associated with high-level leadership of the near-peer state.

5.5 The W3 Approaches: Locations Connected Through People and People Connected Through Locations

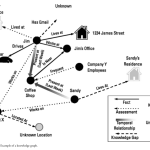

Once immersed in a multi-INT spatial data environment, there are two major approaches used in ABI to establish network knowledge and connect entities. These two approaches are summarized below, both dealing with connecting entities and locations. Together they are known as “W3” approaches, combining “who” and “where” to extend analyst knowledge of social and physical networks.

5.5.1 Relating Entities Through Common Locations

This approach focuses on connecting entities based on presence at common locations. Analysis begins with a known entity and then moves to identifying other entities present at the same location.

The process for evaluating strength of relationship based on locational proximity and type of location relies on the concepts of durability and discreteness, which are further explored in Chapter 7. Colloquially, this process is known as “who-where-who,” and it is primarily focused on building out logical networks.

A perfect example of building out logical networks through locations begins with two entities—people, unique individuals—observed at a private residence on multiple occasions. In a spatial data environment, the presence of two entities at the same location at multiple points in time might bear investigation into the various attributes of those entities. The research process initially might show no apparent connection between them, but by continuing to understand various aspects of the entities, the locational connection may be corroborated and “confirmed” via the respective attributes of the entities. This could take many forms, including common social contacts, family members, and employers.

The easiest way to step through “who-where-who” is through a series of four questions. These questions let an analyst logically work through a potential relationship suggested by the colocation of individual entities. The first question is: “What is the entity or group of entities of interest?” This is often expressed as a simple “who” in shorthand, but the focus here is on identifying a specific entity or group of entities that are of interest to the analyst. Note that while ABI’s origins are in counterterrorism, and thus in the search for “hostile entities,” the entities of interest could also be neutral or friendly entities, depending on what kind of organization the analyst is a part of.

In practice, this phase will consist of using known entities of interest and examining locations where the entities have been present. This process can often lead to constructing a full “pattern of life” for one or more specific entities, but it can also be as simple as identifying locations where entities were located on one or more specific occasions.

The second question is: “Where has this entity been observed?” At this point, focus is on the spatial-temporal data environment. The goal here is to establish various locations where the entity was present along with as precise a time as possible.

The third question is: “What other entities have also been observed at these locations?” This is perhaps the most important of the four questions in this process. Here, the goal is to identify entities co-occurring with the entity or entities of interest. The focus is on spatial co-occurrence, ideally over multiple locations. This intuitive point (more co-occurrences increase the likelihood of a true correlation) is present in the math used to describe a linear correlation function:
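As an illustration only, and assuming the function meant here is the Pearson sample correlation coefficient (the text does not name it), the relationship can be written as follows. The symbols are assumptions introduced for this sketch: $x_i$ and $y_i$ record whether each of two entities was observed at location $i$, $n$ is the number of locations considered, and $\bar{x}$ and $\bar{y}$ are the respective means.

```latex
r = \frac{\sum_{i=1}^{n} (x_i - \bar{x})(y_i - \bar{y})}
         {\sqrt{\sum_{i=1}^{n} (x_i - \bar{x})^2}\,\sqrt{\sum_{i=1}^{n} (y_i - \bar{y})^2}}
```

Under this reading, each additional location at which both entities are observed adds a positive term to the numerator, which is the intuition the paragraph describes.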

The characteristics of each location considered must be evaluated in order to separate “chance co-occurrences” from “demonstrative co-occurrences.” In addition, referring back to the pillar of sequence neutrality, it is vitally important to consider the potential for co-occurrences that are temporally separated. This often occurs when networks of entities change their membership but use consistent locations for their activities, as is the case with many clubs and societies.
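One way to make the distinction between chance and demonstrative co-occurrence concrete is to let a shared location count for less when many other entities are also seen there. The sketch below is a rough illustration under that assumption. The inverse-crowding weight, the function name `cooccurrence_scores`, and the sample sightings are all hypothetical, and the weight is not the durability and discreteness evaluation described in Chapter 7. Timestamps are omitted on purpose, so temporally separated co-occurrences still count.

```python
from collections import defaultdict


def cooccurrence_scores(sightings, entity_of_interest):
    """Score other entities by locations shared with the entity of interest.

    sightings: iterable of (entity, location) pairs (hypothetical schema).
    Each shared location contributes 1 / (number of other entities seen there),
    so a crowded location contributes less than a private one.
    """
    entities_at = defaultdict(set)
    for entity, location in sightings:
        entities_at[location].add(entity)

    scores = defaultdict(float)
    for entities in entities_at.values():
        if entity_of_interest not in entities:
            continue
        others = entities - {entity_of_interest}
        if not others:
            continue  # nobody else was observed at this location
        weight = 1.0 / len(others)
        for other in others:
            scores[other] += weight
    return dict(scores)


# Hypothetical sightings: a private residence and a busy market.
sightings = [
    ("A", "residence"), ("B", "residence"),
    ("A", "market"), ("B", "market"), ("C", "market"),
    ("D", "market"), ("E", "market"),
]
scores = cooccurrence_scores(sightings, "A")
```

In this sample, B scores higher than C, D, or E because B also shares the residence with A, while the market sighting alone carries little weight.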

The fourth and final question is: “Is locational proximity indicative of some kind of relationship between the initial entity and the discovered entity?”
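The first three questions can be sketched as a small data-processing step, leaving the fourth as the analyst's judgment. This is a minimal illustration and not the authors' implementation. The `Observation` record, the function names `who_where_who` and `rank_candidates`, and the sample data are hypothetical. Observation times are carried but not used as a filter, in keeping with sequence neutrality.

```python
from collections import defaultdict
from typing import NamedTuple


class Observation(NamedTuple):
    """One sighting of an entity at a location (hypothetical schema)."""
    entity: str
    location: str
    time: int  # e.g. hours since an arbitrary reference point


def who_where_who(observations, entity_of_interest):
    """Return {other_entity: set of locations shared with the entity of interest}."""
    # Question 2: where has the entity of interest been observed?
    locations = {o.location for o in observations
                 if o.entity == entity_of_interest}
    # Question 3: what other entities have been observed at those locations?
    shared = defaultdict(set)
    for o in observations:
        if o.entity != entity_of_interest and o.location in locations:
            shared[o.entity].add(o.location)
    return dict(shared)


def rank_candidates(shared):
    """Order candidates by number of shared locations (ties broken by name)."""
    return sorted(shared, key=lambda e: (-len(shared[e]), e))


# Question 1: the entity of interest is "A" (hypothetical data).
observations = [
    Observation("A", "residence", 1), Observation("B", "residence", 2),
    Observation("A", "cafe", 5), Observation("B", "cafe", 30),
    Observation("C", "cafe", 6), Observation("D", "market", 7),
]
shared = who_where_who(observations, "A")
candidates = rank_candidates(shared)
```

The ranked list is only an input to the fourth question. Whether a shared location actually indicates a relationship still depends on the character of the location and on corroborating attributes of the entities.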

The goal is to take an existing network of entities and identify additional entities that may have been partially known or completely unknown. The overwhelming majority of entities must interact with each other, particularly to achieve common goals, and this analytic technique helps identify entities that are related based on common locations before explicit metadata or attribute-based relationships are established.

5.5.2 Relating Locations Through Common Entities

This approach is the inverse of the previous approach and focuses on connecting locations based on the presence of common entities. By tracking entities to multiple locations, connections between locations can be revealed.

Where the previous process is focused on building out logical networks where entities are the nodes, this process focuses on building out either logical or physical networks where locations are the nodes. While at first this can seem less relevant to a methodology focused on understanding networks of entities, understanding the physical network of locations helps indirectly reveal information about entities who use physical locations for various means (nefarious and nonnefarious alike).

The first question asked in this process is, “What is the initial location or locations of interest?” This is the most deceptively difficult question to answer, because it involves bounding the initial area of interest.

The next question brings us back to entities: “What entities have been observed at this location?” Whether considering one or more locations, this is where specific entities can be identified or partially known entities can be identified for further research. This is one of the core differences between the two approaches, in that there is no explicit a priori assumption regarding the entities of interest. This question is where pure “entity discovery” occurs, as focusing on locations allows entities not discovered through traditional, relational searches to emerge as potentially relevant players in multiple networks of interest.

The third question is, “Where else have these entities been observed?” This is where a network of related locations is principally constructed. Based on the entities—or networks—discovered in the previous phase of research, the goal is now to associate additional, previously unknown locations based on common entities.

One of the principal uses of this information is to identify locations that share a common group of entities. In limited cases, this approach can be predictive, indicating locations that entities may be associated with even if they have not yet been observed at a given location.
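A simple way to express how strongly two locations share a common group of entities is the Jaccard similarity of the sets of entities observed at each. The choice of Jaccard similarity, the function name `location_similarity`, and the sample sets are assumptions made for illustration; the text does not prescribe a particular measure.

```python
def location_similarity(entities_a, entities_b):
    """Jaccard similarity of the entity sets observed at two locations.

    Returns a value between 0.0 (no common entities) and 1.0 (identical sets).
    """
    a, b = set(entities_a), set(entities_b)
    if not a and not b:
        return 0.0  # no observations at either location
    return len(a & b) / len(a | b)


# Hypothetical entity sets observed at two locations.
warehouse = {"A", "B", "C"}
safehouse = {"B", "C", "D"}
similarity = location_similarity(warehouse, safehouse)
```

A high value suggests the two locations serve the same group. It does not by itself establish why, which is the subject of the final question.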

The final question is thus, “Is the presence of common entities indicative of a relationship between locations?”
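The first three questions of this inverse process can be sketched in the same way, again leaving the final question to the analyst. This is a minimal illustration under stated assumptions: the function name `where_who_where`, the (entity, location) pair schema, and the sample data are hypothetical and not drawn from the source.

```python
from collections import defaultdict


def where_who_where(sightings, seed_locations):
    """Return {new_location: set of entities linking it to the seed locations}.

    sightings: iterable of (entity, location) pairs (hypothetical schema).
    """
    # Question 1: the initial location or locations of interest.
    seeds = set(seed_locations)
    # Question 2: what entities have been observed at these locations?
    entities = {entity for entity, location in sightings if location in seeds}
    # Question 3: where else have these entities been observed?
    linked = defaultdict(set)
    for entity, location in sightings:
        if entity in entities and location not in seeds:
            linked[location].add(entity)
    return dict(linked)


# Hypothetical sightings, starting from a single location of interest.
sightings = [
    ("A", "compound"), ("B", "compound"),
    ("A", "warehouse"), ("B", "warehouse"), ("C", "warehouse"),
    ("B", "cafe"), ("D", "cafe"),
]
linked = where_who_where(sightings, ["compound"])
```

Note that no entity of interest is supplied as input. The entities emerge from the seed location, which mirrors the lack of an a priori assumption about entities in this approach.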

Discovering correlation between entities and locations is only the first step. Contextual information must then be examined dispassionately to support or refute the hypothesis suggested by entity commonality.

At this point, the assessment aspect of both methods must be discussed. By separating what is “known” to be true versus what is “believed” to be true, analysts can attempt to provide maximum value to intelligence customers.

5.6 Assessments: What Is Known Versus What Is Believed

At the end of both methods is an assessment question: Has the process of moving from vast amounts of data to specific data about entities and locations provided correlations that demonstrate actual relationships between entities and/or locations?
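One lightweight way to keep the two categories apart in an analytic product is to tag each finding with its basis when it is recorded. The sketch below is an illustration only. The `Finding` record, its `known` flag, the function name `split_findings`, and the sample statements are hypothetical and are not a structure described in the source.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Finding:
    """A single statement plus whether the data directly supports it."""
    statement: str
    known: bool  # True: directly observed in data; False: analytic judgment


def split_findings(findings):
    """Separate statements that are known from statements that are believed."""
    known = [f.statement for f in findings if f.known]
    believed = [f.statement for f in findings if not f.known]
    return known, believed


# Hypothetical findings from a who-where-who pass.
findings = [
    Finding("A and B were both observed at the residence", known=True),
    Finding("A and B have a personal relationship", known=False),
]
known, believed = split_findings(findings)
```

Keeping the observed co-occurrence separate from the inferred relationship lets a customer see which part of the assessment rests on data and which on judgment.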